If we’re being honest, it’s not people who “lie” in reports. It’s the attribution model, the tracking limitations, and the way platforms collect and stitch data. The most common mistake is treating last click as “the truth” and making decisions as if the entire customer journey consisted of a single touchpoint.

Let’s break down where distortions come from, how last click differs from multi-touch, and how to avoid drowning in numbers that look clean but lead you in the wrong direction.

1) What Is Last-Click Attribution (and Why Everyone Uses It)

Last click assigns 100% of the credit for a conversion to the final channel/campaign the user interacted with before purchasing or submitting a lead.
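
To make the rule concrete, here is a minimal sketch of last-click crediting. The journey format (an ordered list of channel names plus a conversion value) and the channel names are assumptions made for illustration, not the data model of any particular analytics tool.

```python
from collections import defaultdict


def last_click_credit(journeys):
    """Give 100% of each conversion's value to the final touchpoint.

    `journeys` is a list of (touchpoints, value) pairs, where `touchpoints`
    is an ordered list of channel names ending at the conversion.
    """
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        if touchpoints:  # skip conversions with no recorded touchpoints
            credit[touchpoints[-1]] += value
    return dict(credit)


# Hypothetical journeys: channel names and values are made up.
journeys = [
    (["social", "brand_search", "retargeting"], 100.0),
    (["content", "email"], 50.0),
]
print(last_click_credit(journeys))
```

In this toy data, social, brand search, and content never appear in the result at all, even though they opened both journeys.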

Why businesses love it:

- It’s easy to explain: “the sale came from X.”

- It’s easy to optimize: “cut what doesn’t convert.”

- It’s available almost everywhere by default.

The problem: by definition, last click ignores everything that happened earlier—awareness, warming up, comparison, remarketing, brand search triggers, and so on.

2) Multi-Touch Attribution: What It Is (and Why It Sounds Like “Truth”)

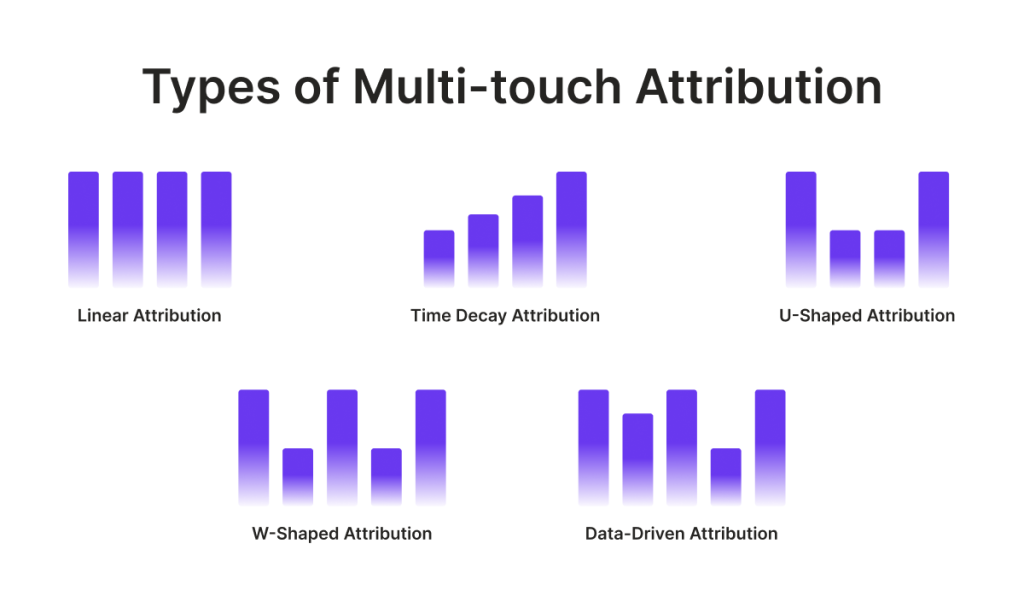

Multi-touch attribution (MTA) tries to distribute conversion value across multiple touchpoints. Common variants include:

- Linear: equal credit to every touchpoint

- Time decay: touchpoints closer to conversion get more weight

- Position-based (U-shaped): more weight to first and last touches, less to the middle

- Data-driven / algorithmic: weights calculated from observed data (a more complex topic on its own)
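
The three rule-based variants above can be sketched as weighting functions. This is an illustration under stated assumptions: the 7-day half-life for time decay and the 40/20/40 split for position-based are common conventions chosen here as defaults, not values prescribed by any specific platform. Data-driven attribution is not sketched, since it depends on a fitted model.

```python
def linear_weights(n):
    """Equal credit to each of n touchpoints (n >= 1)."""
    return [1.0 / n] * n


def time_decay_weights(days_before_conversion, half_life_days=7.0):
    """Weight halves for every `half_life_days` a touch sits before the conversion."""
    raw = [0.5 ** (days / half_life_days) for days in days_before_conversion]
    total = sum(raw)
    return [w / total for w in raw]


def position_based_weights(n, first=0.4, last=0.4):
    """U-shaped: fixed shares for first and last touch, the rest split across the middle."""
    if n == 1:
        return [1.0]
    if n == 2:
        return [first / (first + last), last / (first + last)]
    middle = (1.0 - first - last) / (n - 2)
    return [first] + [middle] * (n - 2) + [last]


# Hypothetical journey: three touches, 5, 2, and 0 days before the purchase.
days = [5, 2, 0]
for name, weights in [
    ("linear", linear_weights(len(days))),
    ("time decay", time_decay_weights(days)),
    ("position-based", position_based_weights(len(days))),
]:
    print(name, [round(w, 2) for w in weights])
```

Every function returns weights that sum to 1, so each model splits the same conversion; only the shares differ.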

Why multi-touch feels “more fair”:

- It reflects the real customer journey.

- It shows the role of upper-funnel activity.

- It explains why social/video/content may not “convert directly,” but sales drop when you turn it off.

But multi-touch can “lie” too—just in a different way.

3) The Real Issue: Reports “Lie” Because Models and Data Are Limited

Any attribution approach is a model. It’s not objective truth—it’s a rule for splitting credit when we can’t perfectly observe causality.

Most distortions come from three sources:

A) Incomplete data (privacy, cookies, cross-device)

- User saw an ad on mobile but bought on desktop.

- Some clicks/views aren’t tracked (ad blockers, browser restrictions such as Safari’s Intelligent Tracking Prevention, iOS App Tracking Transparency opt-outs).

- Some conversions are “lost” or stitched incorrectly.

Result: last click and multi-touch see different slices of reality and split credit differently.

B) Different attribution windows (7/14/30 days)

The attribution window is how far back a touchpoint can still be counted as influencing a conversion.

- Short windows often exclude upper-funnel touches.

- Long windows increase the chance of crediting touchpoints that weren’t truly causal.

Result: two reports with the same model but different windows can contradict each other.
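
A small sketch of that effect: the same journey and the same (linear) model, evaluated with 7-, 14-, and 30-day windows. The dates, channel names, and helper functions are hypothetical and exist only for this illustration.

```python
from datetime import datetime, timedelta


def touches_in_window(touches, conversion_time, window_days):
    """Keep only the channels touched within `window_days` before the conversion."""
    cutoff = conversion_time - timedelta(days=window_days)
    return [channel for channel, ts in touches if cutoff <= ts <= conversion_time]


def linear_credit(channels):
    """Split one conversion equally across eligible touches.

    Assumes each channel appears at most once in the journey.
    """
    if not channels:
        return {}
    return {channel: round(1.0 / len(channels), 2) for channel in channels}


# Hypothetical journey; dates and channel names are made up.
conversion_time = datetime(2024, 1, 20)
touches = [
    ("social", datetime(2024, 1, 1)),         # 19 days before the conversion
    ("brand_search", datetime(2024, 1, 12)),  # 8 days before
    ("retargeting", datetime(2024, 1, 19)),   # 1 day before
]

for window_days in (7, 14, 30):
    eligible = touches_in_window(touches, conversion_time, window_days)
    print(window_days, linear_credit(eligible))
```

With the 7-day window only retargeting is eligible, so a "multi-touch" report collapses into last click. Social only starts receiving credit once the window reaches 30 days.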

C) Platform incentives (“judge and jury” problem)

Ad platforms often:

- have more signals inside their own ecosystem,

- model or “fill in” conversions,

- present results aligned with their optimization logic.

Result: platform-reported conversions and your site analytics will rarely match, and some gap between them is normal.

4) Where Last Click “Lies” (and How)

4.1) It over-credits bottom-funnel channels

Typical “last-touch winners”:

- branded search

- direct traffic

- remarketing

- email to existing lists

- affiliate/coupon partners

These often appear at the end of the journey. They matter—but last click acts as if they did all the work.

Symptom: “Social/video/content doesn’t convert. Let’s cut it.”

4.2) It punishes channels that create demand

Content, reach, lead magnets, community—these often work as:

- first touch

- warm-up

- objection handling

Last click says: “Thanks, but you get zero.”

Symptom: after cutting upper funnel, sales hold for a bit, then drop because demand isn’t being replenished.

4.3) It breaks down with long sales cycles

The longer the decision cycle (B2B, high-ticket items, subscriptions), the more last click becomes blind to how conversions are actually created.

5) Where Multi-Touch “Lies” (Yes, It Does)

5.1) It can spread credit across noise

If you split credit across 10 touchpoints, you can end up rewarding random or low-impact interactions simply because they happened.

Symptom: everything looks “a little effective,” and it’s unclear what truly drives growth.

5.2) It depends on identity stitching quality

Multi-touch only works if you can reliably say:

- this is the same person,

- these were their touchpoints,

- this is the correct order.

In reality: devices, browsers, missing events, partial modeling—everything adds uncertainty.

Symptom: the model looks smart, but conclusions shift dramatically after tracking changes.

5.3) It can turn into a belief system

Teams start treating “data-driven attribution” as magic. It’s still a model that:

- is only as good as the data it receives,

- optimizes for what’s measurable,

- can drift with seasonality, creative changes, and traffic mix shifts.

6) A Quick Example: Why Reports Disagree

User journey:

- saw a social video

- 3 days later searched the brand and read reviews

- 2 days later clicked a retargeting ad and purchased

How different systems credit it:

- Last click: 100% to retargeting

- Multi-touch (U-shaped): most of the credit to social (first touch) and retargeting (last touch), with a smaller share to brand search in the middle

- Social platform report: may claim the conversion via view-through or modeled data

- Website analytics: may not even see the first touch if it was a view without a click

Each report can be “right” within its own rules.
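
The journey above can be run through a rough sketch to show how the credit moves depending on both the model and which touches a system can observe. The 40/20/40 split, the even split for two-touch journeys, and the "view"/"click" labels are assumptions for illustration; real tools apply their own rules.

```python
def last_click(touches):
    """All credit to the final observed touch."""
    return {touches[-1]: 1.0}


def u_shaped(touches, first=0.4, last=0.4):
    """40/20/40-style split; assumes distinct channel names in the journey."""
    if len(touches) == 1:
        return {touches[0]: 1.0}
    if len(touches) == 2:
        return {touches[0]: 0.5, touches[1]: 0.5}
    middle = (1.0 - first - last) / (len(touches) - 2)
    credit = {touch: middle for touch in touches[1:-1]}
    credit[touches[0]] = first
    credit[touches[-1]] = last
    return credit


# The journey from the example; interaction types are assumed.
journey = [
    ("social_video", "view"),
    ("brand_search", "click"),
    ("retargeting", "click"),
]
all_touches = [channel for channel, _ in journey]
clicks_only = [channel for channel, kind in journey if kind == "click"]

reports = {
    "last click": last_click(all_touches),
    "U-shaped, all touches": u_shaped(all_touches),
    "U-shaped, clicks only": u_shaped(clicks_only),
}
for name, credit in reports.items():
    print(name, {channel: round(share, 2) for channel, share in credit.items()})
```

The third report uses the same U-shaped rule as the second, yet social video disappears from it entirely because the view was never recorded as a click.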

7) What Marketers Should Do (So You Don’t Become a Prisoner of the Model)

7.1) Keep two perspectives at the same time

- Last click helps you see who closes the deal.

- Multi-touch helps you see who creates and warms demand.

If you only look at last click, you cut upper funnel. If you only look at multi-touch, you may blur accountability and make optimization harder.

7.2) Look beyond attributed sales—focus on incrementality

The key question: Does this channel create lift, or does it just capture people who were already going to buy?

Practical ways to get closer to incrementality (without a lab setup):

- geo-based or audience split “on/off” tests

- retargeting holdouts (a portion of the audience doesn’t see ads)

- cohort comparisons before/after changes

- MMM (marketing mix modeling) for larger budgets when MTA isn’t enough
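
As a rough illustration of the holdout idea, the sketch below compares an exposed group with a holdout group and estimates how many attributed conversions were actually incremental. All numbers are invented, the function name is hypothetical, and the sketch deliberately skips significance testing, which a real test would need before any budget decision.

```python
def holdout_lift(exposed_users, exposed_conversions, holdout_users, holdout_conversions):
    """Estimate incremental conversions from a simple exposed-vs-holdout split."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    # Conversions the exposed group would likely have produced without ads.
    baseline = holdout_rate * exposed_users
    incremental = exposed_conversions - baseline
    share = incremental / exposed_conversions if exposed_conversions else 0.0
    return {
        "exposed_rate": exposed_rate,
        "holdout_rate": holdout_rate,
        "incremental_conversions": incremental,
        "incremental_share": share,
    }


# Hypothetical retargeting test: 90% of the audience sees ads, 10% is held out.
result = holdout_lift(
    exposed_users=9000,
    exposed_conversions=270,
    holdout_users=1000,
    holdout_conversions=25,
)
for key, value in result.items():
    print(key, round(value, 3))
```

In this made-up case the holdout group converts at 2.5% without seeing any ads, so only about 45 of the 270 conversions that last click would hand to retargeting are incremental.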

7.3) Analyze branded demand separately

Brand growth often shows up as:

- direct

- organic

- branded search

Last click applauds these channels, even when the real driver is upper-funnel activity.

7.4) Split KPIs by funnel stage

Don’t demand “buy tomorrow” performance from awareness channels:

- Upper funnel: reach, attention, brand search growth, new users

- Mid funnel: visits, engagement, sign-ups, add-to-cart

- Bottom funnel: CPA/ROAS, sales, LTV

8) Quick Self-Check: Where You Might Be Fooling Yourself

- Do you optimize budgets only by last-click ROAS?

- Is retargeting your “best channel” and eating most of the budget?

- Do you treat branded search as your “most profitable source”?

- Did you cut upper funnel and see a drop 2–6 weeks later?

- Do platform conversions look much higher than site analytics—and you can’t explain why?

If you answered “yes” to at least two, your attribution setup is probably already distorting how you spend your budget.

9) So… Which One Lies More?

- Last click usually “lies” by rewriting the story as if a conversion happened because of one final touchpoint.

- Multi-touch usually “lies” by spreading credit across everything—including noise—and by relying heavily on identity stitching and incomplete data.

Final takeaway: don’t chase a perfect “truth” in attribution. Build a usable decision view where:

- bottom funnel is responsible for closing,

- upper funnel is responsible for creating demand,

- and major budget decisions are validated with incrementality tests—not just prettier weighting schemes.