Programmatic buying has been living in a first-price world for a while: you win, you pay your bid (not “a bit above the second place”). That changes the economics completely. Without smart optimization you either overpay or lose auctions.

In 2025–2026, the most consistently effective approaches fall into four buckets:

- Bid shading (smart bid “discounting”)

- Dynamic bidding (reacting to context + clearing price expectations)

- Geo / time-slot / device adjustments (multipliers on top of a base bid)

- Guardrails (pacing, caps, anomaly controls) so ML doesn’t wreck your economics

Below is a practical guide with simple formulas—no heavy math, but enough to implement.

1) The Foundation: What Are We Optimizing?

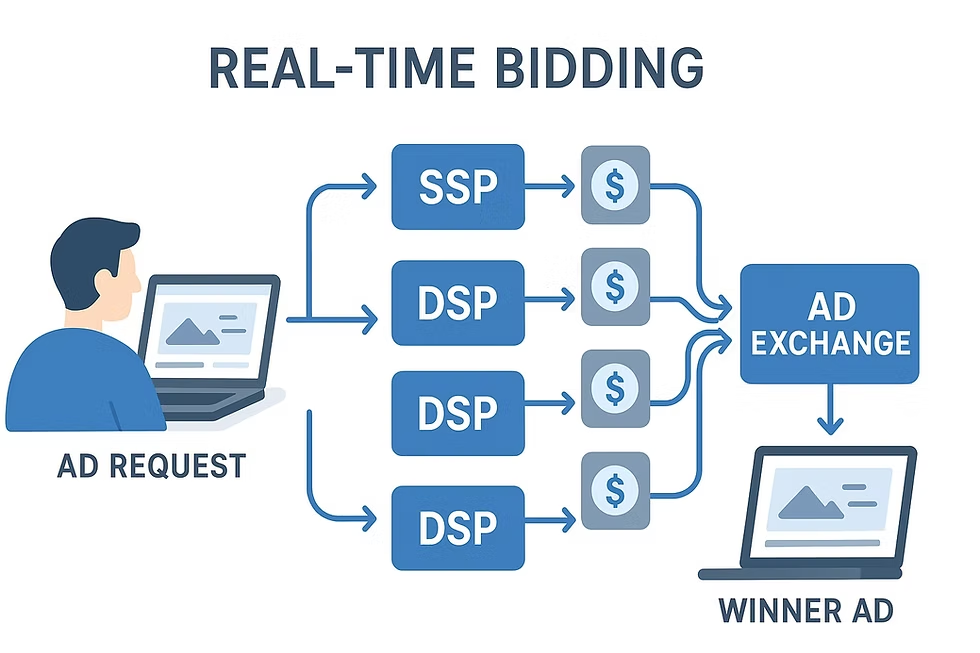

In real time, each impression is a decision: how much to bid to maximize value under constraints (budget, CPA/ROAS, frequency, brand safety, etc.).

A useful mental model:

- Expected value of the impression

- Market winning price (clearing price)

- Overpayment risk (especially in first-price)

- Goals (CPA/ROAS, reach, share-of-voice)

Bid shading is a buy-side technique that lowers your bid in first-price auctions so you pay less while keeping win rate and delivery stable.

2) A Simple Base Bid Formula

If you’re performance-driven (conversions / purchases)

Define the “true” value of an impression:

EV_imp = P(conv | imp) × Value_conv

Convert to CPM (because you’re bidding CPM):

Bid_base_CPM = 1000 × EV_imp × k_margin

Where k_margin is how much of the expected value you’re willing to “spend” in the auction (often ~0.6–0.9 depending on margins and targets).
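The two formulas above can be sketched in a few lines of Python. This is a minimal illustration, not a production bidder: the function name and the example numbers are made up, and `p_conv` is assumed to come from whatever conversion model you already have.

```python
def base_bid_cpm(p_conv: float, value_conv: float, k_margin: float = 0.7) -> float:
    """Bid_base_CPM = 1000 × EV_imp × k_margin, with EV_imp = P(conv | imp) × Value_conv."""
    if not 0.0 <= p_conv <= 1.0:
        raise ValueError("p_conv must be a probability in [0, 1]")
    ev_imp = p_conv * value_conv          # expected value of a single impression
    return 1000.0 * ev_imp * k_margin     # scale to CPM and keep a margin


# Illustrative numbers only: 0.05% conversion probability, 40.00 value per conversion.
example_bid = base_bid_cpm(p_conv=0.0005, value_conv=40.0, k_margin=0.7)
```

With these inputs, one impression is worth 0.02, so the full-value CPM is 20 and the base bid after the 0.7 margin is about 14.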

If you’re brand / reach-driven

You can swap conversion value for contact value, for example:

EV_imp = P(viewable) × Value_viewable

or

EV_imp = P(incremental_reach) × Value_increment

Same logic: estimate value first, then turn it into CPM.

3) Bid Shading: “Discount” Smartly Without Losing Volume

The intuition

In first-price, bidding your full value often leads to systematic overpayment. Shading shifts you from “bid my value” to “bid just enough above the expected winning price.”

A practical approach that works almost everywhere

- Compute the base bid: Bid_base_CPM

- Predict the winning price: CP_hat (from win/loss logs, or exchange/SSP signals where available)

- Set the final shaded bid:

Bid_shaded = min(Bid_base_CPM, CP_hat + delta)

delta is a small “win cushion” (e.g., +3–10% or a small fixed increment). Tune it by SSP / auction environment.
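As a rough sketch of the shaded-bid rule, assuming the cushion is expressed as a percentage of the predicted clearing price (the parameter name `delta_pct` is an illustration, not part of any DSP API):

```python
def shaded_bid(bid_base_cpm: float, cp_hat: float, delta_pct: float = 0.05) -> float:
    """Bid_shaded = min(Bid_base_CPM, CP_hat + delta).

    Assumption: delta is a share of CP_hat (e.g. 0.05 for +5%).
    """
    delta = cp_hat * delta_pct
    # Never bid above the value-based base bid, even if the market is pricier.
    return min(bid_base_cpm, cp_hat + delta)


# Market expected to clear well below our value: bid just above CP_hat.
cheap_market = shaded_bid(bid_base_cpm=14.0, cp_hat=8.0, delta_pct=0.05)
# Market expected to clear above our value: the base bid acts as the ceiling.
pricey_market = shaded_bid(bid_base_cpm=6.0, cp_hat=8.0, delta_pct=0.05)
```

The `min` is what keeps shading safe: a bad clearing-price prediction can make you lose an auction, but it cannot push the bid above what the impression is worth to you.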

If you don’t have a clearing-price prediction

Start with a shading factor:

Bid_shaded = Bid_base_CPM × s

Where s is typically in the 0.6–0.95 range and adapts over time.

How to adapt s without complex math

Pick a target win rate (or delivery share): WR_target. Every N minutes, compare it with the actual win rate, WR_fact:

- If WR_fact < WR_target → increase s (shade less)

- If WR_fact > WR_target → decrease s (shade more)

Simple, but very stable in production.
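One way to sketch this feedback loop is a fixed-step controller. The step size, the dead band (`tolerance`) and the clamp to 0.6–0.95 are assumptions chosen for illustration; tune them against your own logs.

```python
def update_shading_factor(
    s: float,
    wr_fact: float,
    wr_target: float,
    step: float = 0.02,       # assumed adjustment per review interval
    tolerance: float = 0.01,  # assumed dead band to avoid reacting to noise
    s_min: float = 0.6,
    s_max: float = 0.95,
) -> float:
    """Nudge the shading factor s toward the target win rate."""
    if wr_fact < wr_target - tolerance:
        s += step   # winning too little: shade less (bid closer to base)
    elif wr_fact > wr_target + tolerance:
        s -= step   # winning more than needed: shade more (bid lower)
    return max(s_min, min(s_max, s))  # keep s inside the allowed range


# Called once every N minutes with the win rate observed in that window.
s_next = update_shading_factor(s=0.80, wr_fact=0.10, wr_target=0.20)
```

A small fixed step trades speed for stability: the factor takes several intervals to move, but one noisy window cannot swing bids sharply.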

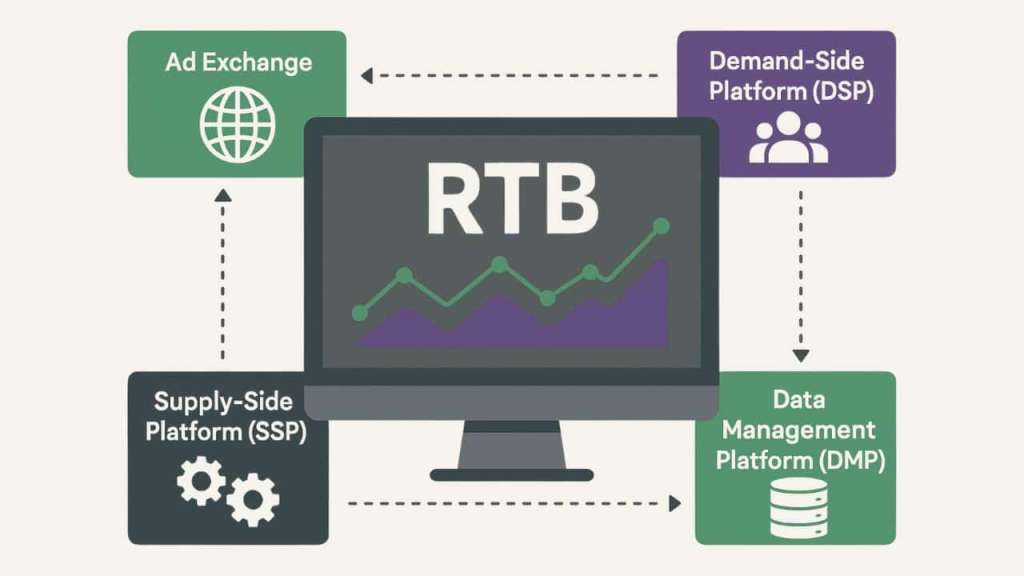

4) Dynamic Bidding: One Price for Everyone Is Dead

Dynamic bidding means the bid changes for every request based on context. In practice, it combines:

- Value prediction (CVR / revenue / expected value)

- Winning-price prediction (CP_hat)

- Constraints (pacing, frequency, caps)

- Business rules (brand safety, blocklists, allowlists)

A “marketing + engineering friendly” mini-formula

Bid_final = Bid_shaded × M_geo × M_time × M_device × M_quality × M_pacing

Each M_* is a multiplier, typically in the 0.5–1.5 range (sometimes wider for highly polarized segments).
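A minimal sketch of the mini-formula, assuming each multiplier is clamped to the 0.5–1.5 range before it is applied (widen the bounds if your segments really are that polarized). The dictionary keys are illustrative names.

```python
from math import prod
from typing import Mapping


def clamp(x: float, lo: float = 0.5, hi: float = 1.5) -> float:
    """Keep a single multiplier inside [lo, hi]."""
    return max(lo, min(hi, x))


def final_bid(bid_shaded: float, multipliers: Mapping[str, float]) -> float:
    """Bid_final = Bid_shaded × product of all (clamped) M_* multipliers."""
    return bid_shaded * prod(clamp(m) for m in multipliers.values())


example_final = final_bid(
    8.4,
    {"geo": 1.2, "time": 1.0, "device": 0.9, "quality": 1.0, "pacing": 0.8},
)
```

Because the multipliers compound, five factors at 1.5 each would multiply the bid by more than 7×, which is one reason a campaign-level bid cap is still needed on top of per-multiplier limits.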

5) Geo / Time-Slot / Device Adjustments (Fastest ROI)

This “simple magic” often outperforms fancy modeling—especially early on.

5.1 Geo multipliers (M_geo)

What reliably works:

- Multipliers by region/city + (optionally) location type signals

- Separate coefficients for new vs returning users

- Optimize on CPA/ROAS or LTV, not just conversion rate

Example:

- High-LTV city: M_geo = 1.20

- Lower-value regions: M_geo = 0.85

5.2 Time-slot multipliers (M_time)

Two common modes:

- Sales: boost hours with best CVR and/or AOV

- Awareness: boost hours with best viewability / completion

Tip: don’t over-segment into 24 hours. Start with 4–6 blocks (morning/day/evening/night + weekends) to reduce noise.
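The block idea can be sketched as a simple lookup. The hour boundaries and the multiplier values below are placeholders, and the timestamp is assumed to already be in the user's local time zone.

```python
from datetime import datetime

# Placeholder values; derive real ones from your own CVR/AOV or viewability data.
M_TIME = {"morning": 1.00, "day": 0.95, "evening": 1.15, "night": 0.80, "weekend": 1.05}


def time_block(ts: datetime) -> str:
    """Map a local timestamp to one of five coarse blocks."""
    if ts.weekday() >= 5:        # Saturday = 5, Sunday = 6
        return "weekend"
    hour = ts.hour
    if 6 <= hour < 12:
        return "morning"
    if 12 <= hour < 18:
        return "day"
    if 18 <= hour < 23:
        return "evening"
    return "night"               # 23:00 to 05:59


def m_time(ts: datetime) -> float:
    """Look up the time-slot multiplier for a timestamp."""
    return M_TIME[time_block(ts)]
```

Five blocks mean each one pools several hours of data, so a single slow hour does not drag its own multiplier down.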

5.3 Device multipliers (M_device)

Baseline patterns (vary by vertical):

- Mobile web: cheaper, sometimes weaker conversion

- In-app: often higher quality, usually pricier

- Desktop: can win for high-consideration purchases and long forms

In practice, consider multipliers by: device × OS × browser × connection type—but aggregate enough to keep stats stable.
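One possible way to turn segment stats into a multiplier while keeping them stable is sketched below. This is an assumption for illustration rather than a method prescribed here: it compares value per impression in the segment with the overall average, falls back to 1.0 when the segment is too small, and clips the result. The `min_impressions` threshold is arbitrary.

```python
def segment_multiplier(
    seg_value: float,
    seg_impressions: int,
    overall_value: float,
    overall_impressions: int,
    min_impressions: int = 50_000,  # assumed minimum sample before trusting a segment
    lo: float = 0.5,
    hi: float = 1.5,
) -> float:
    """Ratio of segment value-per-impression to the overall average, clipped to [lo, hi]."""
    if seg_impressions < min_impressions or overall_impressions <= 0 or overall_value <= 0:
        return 1.0  # not enough data: stay neutral
    seg_vpi = seg_value / seg_impressions
    overall_vpi = overall_value / overall_impressions
    return max(lo, min(hi, seg_vpi / overall_vpi))
```

The same function works for geo, time, or device segments; only the grouping key used to build the inputs changes.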

6) Guardrails: Keep “Smart Bidding” from Becoming an Expensive Mistake

Without guardrails, even good logic can:

- burn budget early in the day

- blow up CPA

- over-focus on one “favorite” segment and lose scale

Common 2025–2026 guardrails:

1) Budget pacing

- Spend-per-minute limits

- Adjust M_pacing down when ahead of plan

2) Bid caps

- Bid_final ≤ Bid_cap_campaign

- Optional caps by SSP / format / deal type

3) Data anomaly controls

- If CVR drops sharply or CPM spikes → temporarily freeze multipliers to safe defaults

4) Supply quality checks

- Use supply-path transparency signals (e.g., chain metadata and seller declarations) to reduce low-quality inventory and unnecessary intermediaries
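The first three guardrails can be sketched as small, independent functions. All thresholds here (the pacing floor, the 50% CVR drop, the 2× CPM spike) are assumed values for illustration, and "safe defaults" is interpreted as resetting every multiplier to 1.0.

```python
from typing import Dict, Mapping


def pacing_multiplier(spend_so_far: float, planned_spend_so_far: float, floor: float = 0.5) -> float:
    """M_pacing: 1.0 when on or behind plan, scaled down proportionally when ahead."""
    if spend_so_far <= 0 or spend_so_far <= planned_spend_so_far:
        return 1.0
    return max(floor, planned_spend_so_far / spend_so_far)


def apply_bid_cap(bid_final: float, bid_cap_campaign: float) -> float:
    """Enforce Bid_final ≤ Bid_cap_campaign."""
    return min(bid_final, bid_cap_campaign)


def safe_multipliers(
    multipliers: Mapping[str, float],
    cvr_now: float,
    cvr_baseline: float,
    cpm_now: float,
    cpm_baseline: float,
    cvr_drop: float = 0.5,   # assumed: CVR below 50% of baseline counts as an anomaly
    cpm_spike: float = 2.0,  # assumed: CPM above 2× baseline counts as an anomaly
) -> Dict[str, float]:
    """Reset all multipliers to 1.0 while an anomaly is detected."""
    anomaly = cvr_now < cvr_baseline * cvr_drop or cpm_now > cpm_baseline * cpm_spike
    if anomaly:
        return {name: 1.0 for name in multipliers}
    return dict(multipliers)
```

Apply the cap last, after all multipliers, so that no combination of adjustments can exceed the campaign limit.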

7) Implementation Checklist (Step-by-Step)

- Collect win/loss logs and track: win rate, CPM, CPA/ROAS, spend share by SSP/geo/time/device.

- Introduce a base bid from value (Bid_base_CPM).

- Add basic bid shading: start with × s, then evolve to CP_hat + delta.

- Layer geo/time/device multipliers (start with 5–10 big segments).

- Turn on pacing + caps.

- Review coefficients and supply controls weekly; prune bad paths, reinforce good ones.
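For the first step of the checklist, a minimal sketch of computing win rate per segment from win/loss logs might look like this. The row format (dictionaries with a segment field and a boolean `won` field) is hypothetical; adapt it to however your DSP exports logs.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def win_rate_by_segment(log_rows: Iterable[Mapping], key: str = "ssp") -> Dict[str, float]:
    """Return wins / bids for each value of `key` (e.g. SSP, geo, device)."""
    bids = defaultdict(int)
    wins = defaultdict(int)
    for row in log_rows:
        segment = row[key]
        bids[segment] += 1
        if row["won"]:          # hypothetical field: True if the bid won the auction
            wins[segment] += 1
    return {segment: wins[segment] / bids[segment] for segment in bids}


# Made-up rows, for illustration only.
sample_rows = [
    {"ssp": "ssp_a", "won": True},
    {"ssp": "ssp_a", "won": False},
    {"ssp": "ssp_b", "won": True},
]
rates = win_rate_by_segment(sample_rows)
```

The per-segment win rates can feed the shading-factor adjustment, one factor per SSP instead of a single global one.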

8) Common Mistakes (And How to Avoid Them)

- Same shading everywhere → clearing prices differ by SSP and format. At least segment by SSP × format.

- Chasing win rate without CPA/ROAS guardrails → easy to buy volume in low-quality inventory.

- Too many micro-segments → noisy stats; the system starts “believing” randomness.

- No supply-path controls → higher risk of waste and intermediary costs.

Conclusion: What Really Works in Real-Time Bid Optimization (2025–2026)

Real-time bid optimization in 2025–2026 is no longer about simple rule-based bidding. The strongest results come from AI-driven decisioning, first-party data activation, and continuous experimentation. Advertisers who combine automation with human strategy gain scalability, efficiency, and resilience against privacy changes.

The key takeaway: winning bids are contextual, predictive, and value-based — not reactive.

Effective RBO Strategies

| Strategy | Why It Works in 2025–2026 | Best Use Case |
|---|---|---|
| AI & ML-Based Bidding | Predicts conversion value in real time | Large-scale performance campaigns |
| Value-Based Bidding (LTV, ROAS) | Focuses on long-term profitability | E-commerce & subscription models |
| First-Party Data Integration | Bypasses third-party cookie limits | CRM-driven & logged-in users |
| Contextual & Intent Signals | Privacy-safe and highly relevant | Open web & programmatic display |
| Dynamic Creative Optimization (DCO) | Aligns bids with creative relevance | Multi-format & multi-geo campaigns |
| Incrementality Testing | Avoids wasted spend on non-incremental users | Mature ad accounts |
| Human-in-the-Loop Control | Prevents over-automation risks | High-budget or regulated industries |

FAQ: Real-Time Bid Optimization

What is real-time bid optimization (RBO)?

RBO is the process of adjusting ad bids instantly for each impression based on user signals, context, and predicted performance.

Is manual bidding still relevant in 2025–2026?

Manual bidding alone is no longer competitive. However, human oversight is critical for setting constraints, testing hypotheses, and interpreting results.

How does privacy regulation affect RBO?

With reduced access to third-party data, RBO now relies more on first-party data, contextual signals, and modeled conversions.

Which metrics matter most for bid optimization today?

ROAS, lifetime value (LTV), incrementality, and predicted conversion value matter more than CPC or CTR alone.

Can small advertisers use real-time bid optimization effectively?

Yes. Many platforms now offer AI-powered bidding tools that scale well even with modest budgets, provided conversion tracking is clean.

How often should bid strategies be reviewed?

At least weekly for performance checks and monthly for strategic adjustments, especially during seasonality or market shifts.