Testing 5–10 creatives is easy: a couple of ad sets, a few banners, and you can keep everything in your head. But when you start working with dozens or even hundreds of creatives, chaos usually begins: duplicate campaigns, confusing names, unclear results and an ad platform that spends 90% of the budget on a single creative while hundreds of others get no delivery at all.

This article gives you a practical creative testing framework that lets you test 100+ creatives without chaos, understand what really works and scale your winners confidently.

1. Strategy First, Creatives Second

The biggest mistake is to generate creatives first and only then think about where to put them. The correct order looks like this:

- Define the goal of the test. What exactly are you looking for: a working angle, a winning format, the best visual + copy combo, or simply validation of an offer?

- Formulate hypotheses. Which audience insights are you testing? Which pains and desires do you touch? Which formats do you want to compare (video vs static, carousel vs single image, UGC vs studio, etc.)?

- Design the campaign structure for testing. How will campaigns, ad sets (or ad groups) and ads be organized? How will you select winners and shut down losers?

Only after this should you move on to design and production.

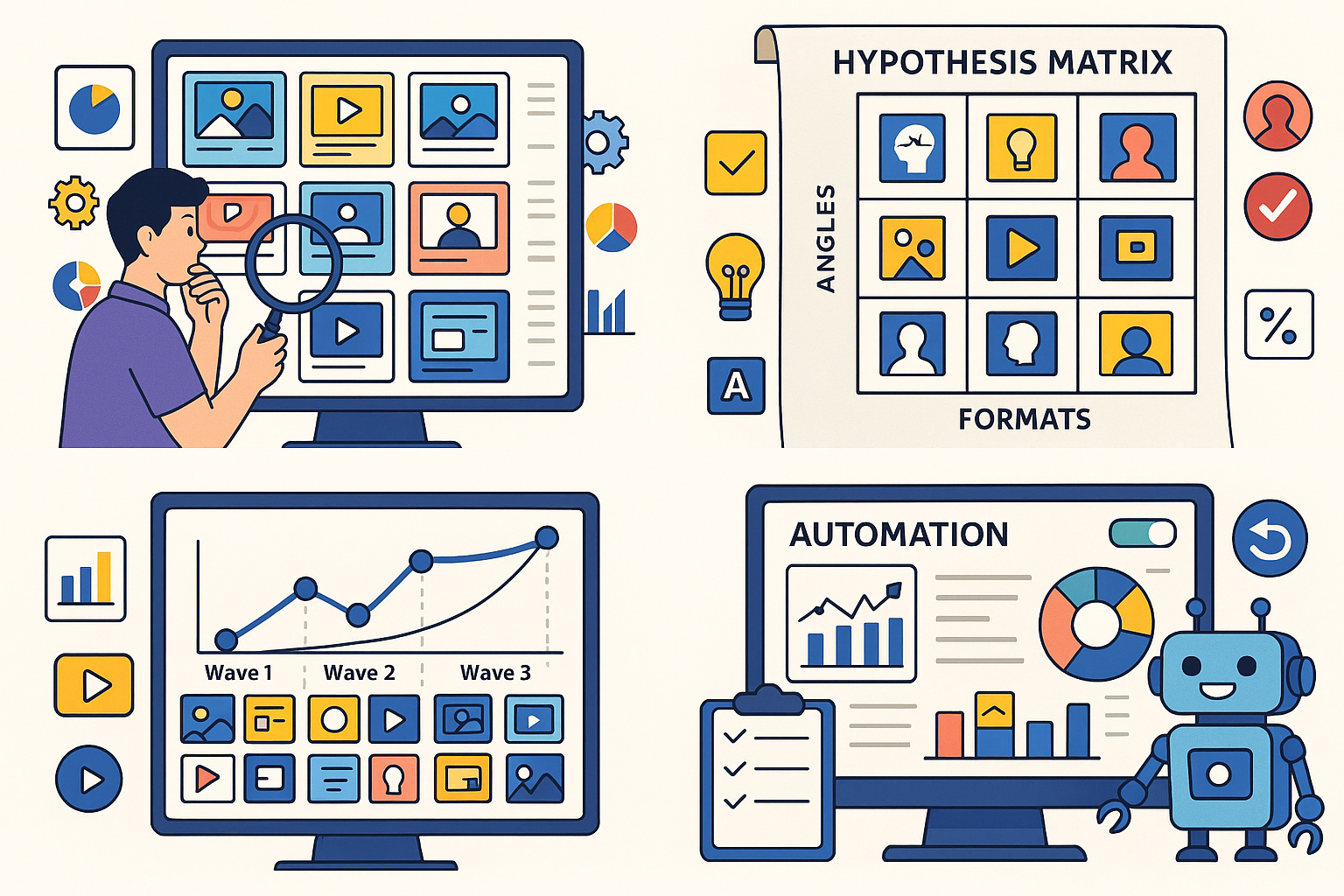

2. Build a Hypothesis Matrix Instead of Random Creatives

To avoid creating a pile of random assets, build a hypothesis matrix.

2.1. The axes of the matrix

At minimum, use three axes:

- Angle (how you frame the offer)

  - Pain / fear

  - Benefit / dream

  - Social proof

  - “Secret trick” / hack

  - Urgency / scarcity

- Audience

  - Beginner vs advanced

  - Gender / age segments

  - Interests / job / income level

- Format / visual style

  - Static image

  - UGC / selfie video / screencast

  - Meme / collage / carousel

The intersections of those axes are creative clusters. For example, “Angle: pain + Audience: beginner + Format: UGC” is one cluster.
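The intersections described above can be enumerated mechanically. Here is a minimal Python sketch, assuming each axis is a short list of labels; the specific values and the `build_matrix` helper are illustrative, not a prescribed taxonomy.

```python
from itertools import product

# Illustrative axis values based on the lists above; replace with your own.
ANGLES = ["pain", "benefit", "social_proof", "secret_trick", "urgency"]
AUDIENCES = ["beginner", "advanced"]
FORMATS = ["static", "ugc_video", "carousel"]


def build_matrix(angles, audiences, formats):
    """Return every angle x audience x format combination as a cluster dict."""
    return [
        {"angle": angle, "audience": audience, "format": fmt}
        for angle, audience, fmt in product(angles, audiences, formats)
    ]


clusters = build_matrix(ANGLES, AUDIENCES, FORMATS)
print(len(clusters))  # 5 * 2 * 3 = 30 clusters
print(clusters[0])
```

In practice you would probably prune combinations that make no sense for the offer before briefing designers, so the working matrix is usually smaller than the full product.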

2.2. Why this matters

With a matrix, 100+ creatives are no longer “100 random banners” but rather 20–30 meaningful clusters with variations inside. When data starts coming in, you don’t just see one lucky image. You see which angle + audience + format combination drives the best CTR and conversion rate.

That gives you scalable insights instead of one-off lucky winners.

3. Campaign Structure: Order Instead of Chaos

The exact setup will differ between Facebook, Google, native networks, push traffic, etc., but the logic is the same everywhere.

3.1. Separate the levels

- Campaign level – objective (purchases, leads, traffic), GEO, offer.

- Ad set / ad group level – audience, placements, optimization type, bid strategy.

- Ad level – the creative itself (visual + copy).

The rule of thumb: don’t test everything at once. For example:

- In one campaign – one GEO, one offer, one optimization goal.

- In one ad set – one audience or segment.

- Inside an ad set – many creatives.

That way, differences in performance are mostly caused by creatives, not by hidden differences in targeting or optimization.

3.2. Naming conventions

Without a clear naming system, your account will look like an archaeological site within a week.

Example scheme:

- Campaign: GEO | Offer | Objective | Stage

  e.g. US | KetoPills | Conversions | CreativeTest_V1

- Ad set / ad group: AUD | Placement | Opt

  e.g. Women25-44 | FB+IG Feed | LowestCost

- Ad (creative): Angle | Format | Ver

  e.g. Pain_Back | UGC_Video | v3 or Dream_Slim | Static | v1

Duplicate the same information in UTM tags or tracker subs (for example, sub1=angle, sub2=format, sub3=version) so analysis is easy later.
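One way to keep names and tracking parameters in sync is to generate both from the same fields. The sketch below assumes the " | " separator and the sub1/sub2/sub3 mapping from the example scheme; the function names and the landing URL are hypothetical, and `tracking_url` assumes the base URL has no query string yet.

```python
from urllib.parse import urlencode

SEP = " | "


def campaign_name(geo, offer, objective, stage):
    return SEP.join([geo, offer, objective, stage])


def ad_set_name(audience, placement, optimization):
    return SEP.join([audience, placement, optimization])


def ad_name(angle, fmt, version):
    return SEP.join([angle, fmt, f"v{version}"])


def tracking_url(base_url, angle, fmt, version):
    """Append sub1/sub2/sub3 so the tracker receives the same fields as the ad name."""
    params = {"sub1": angle, "sub2": fmt, "sub3": f"v{version}"}
    return f"{base_url}?{urlencode(params)}"


print(campaign_name("US", "KetoPills", "Conversions", "CreativeTest_V1"))
print(ad_set_name("Women25-44", "FB+IG Feed", "LowestCost"))
print(ad_name("Pain_Back", "UGC_Video", 3))
# https://example.com/landing is a placeholder, not a real endpoint.
print(tracking_url("https://example.com/landing", "Pain_Back", "UGC_Video", 3))
```

Because the ad name and the URL are built from the same arguments, a report grouped by sub1 or sub2 lines up with what you see in the ad platform.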

4. Wave-Based Testing: Don’t Launch 100 Creatives at Once

Launching all 100+ creatives at once is almost always a bad idea: the algorithm will pick two or three favorites, most ads won’t get any impressions and the budget will be too thin to gather reliable data.

4.1. A practical wave approach

- Wave 1: Launch 20–30 creatives (for example, 4–5 from each of 5–6 clusters).

- Collect data and choose the top 3–5 creatives in each cluster based on clear metrics.

- Wave 2: Create new variations based on those winners (change offer emphasis, CTA, color, thumbnail, hook, etc.).

- Repeat the cycle until you have:

  - stable winners, and

  - a list of angles and formats that clearly do not work.

Over several waves you will test 100+ creatives while your campaigns remain manageable and structured.
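Planning a wave can be reduced to a small selection routine. The following is a rough sketch, assuming the backlog is a list of (creative_id, cluster_id) pairs in priority order; `plan_wave` and its default limits are hypothetical and only mirror the numbers used in this section.

```python
from collections import defaultdict


def plan_wave(creatives, per_cluster=5, wave_size=30):
    """Pick up to per_cluster creatives from each cluster, capped at wave_size.

    creatives: list of (creative_id, cluster_id) tuples in priority order.
    """
    taken = defaultdict(int)
    wave = []
    for creative_id, cluster_id in creatives:
        if len(wave) >= wave_size:
            break
        if taken[cluster_id] < per_cluster:
            taken[cluster_id] += 1
            wave.append(creative_id)
    return wave


# Hypothetical backlog: 6 clusters with 10 creatives each.
backlog = [(f"c{c}_v{v}", f"cluster{c}") for c in range(6) for v in range(10)]
wave_1 = plan_wave(backlog)
launched = set(wave_1)
remaining = [pair for pair in backlog if pair[0] not in launched]
print(len(wave_1), len(remaining))
```

Creatives left in `remaining` stay in the backlog for later waves, alongside the new variations built from winners.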

5. Statistical Thresholds and Stop Rules

How long should a creative run before you decide to keep or kill it? There is no magic number for every account, but you do need clear rules so decisions are not based on feelings.

Example framework for lead or purchase optimization:

- Minimum exposure

  - 1,000–2,000 impressions to evaluate CTR, and

  - 50–100 clicks to get a rough idea of conversion rate from click to lead/sale.

- Hard cost cap

  - If an ad has spent between 0.5 and 1× your target CPA with zero conversions, turn it off.

- Relative underperformance

  - If CTR or conversion rate of a creative is 30–50% worse than the ad set average, kill it even if the sample size is still small.

- “Potential” category

  - If CTR is strong but conversions are low, keep the creative running with a small budget for another wave.

The key is to define your rules before the test starts and not adjust them later just to keep your favorite banners alive.
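Writing the rules down as a function is one way to fix them before launch. The sketch below is a possible encoding under several assumptions that are not in the framework itself: the order in which the rules are checked, the `strong_ctr` multiplier that defines a "strong" CTR, the guard for ads with no delivery, and all names (`AdStats`, `decide`). The relative rule is applied literally, so it can fire on small samples.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    impressions: int
    clicks: int
    conversions: int
    spend: float

    @property
    def ctr(self):
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cr(self):
        return self.conversions / self.clicks if self.clicks else 0.0


def decide(ad, target_cpa, avg_ctr, avg_cr,
           min_impressions=1000, min_clicks=50,
           cost_cap=1.0, worse_by=0.3, strong_ctr=1.2):
    """Return 'kill', 'potential', 'wait' or 'keep' for one ad."""
    if ad.impressions == 0:
        return "wait"  # assumed guard: no delivery yet, nothing to judge
    # Hard cost cap: spend reached cost_cap x target CPA with zero conversions.
    if ad.conversions == 0 and ad.spend >= cost_cap * target_cpa:
        return "kill"
    # Relative underperformance on CTR versus the ad set average.
    if ad.ctr < (1 - worse_by) * avg_ctr:
        return "kill"
    weak_cr = ad.clicks > 0 and ad.cr < (1 - worse_by) * avg_cr
    # "Potential": strong CTR but weak conversion rate (checked before the CR kill).
    if weak_cr and ad.ctr >= strong_ctr * avg_ctr:
        return "potential"
    if weak_cr:
        return "kill"
    # Below minimum exposure: not enough data for a positive verdict.
    if ad.impressions < min_impressions or ad.clicks < min_clicks:
        return "wait"
    return "keep"


print(decide(AdStats(2000, 80, 1, 15.0), target_cpa=20, avg_ctr=0.02, avg_cr=0.05))
```

The thresholds are parameters on purpose: agree on their values before the wave starts and leave them unchanged until it ends.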

6. Cluster Analysis: Look for Patterns, Not Just Lucky Ads

After each wave, don’t just stare at the top five images. Look for patterns in your clusters.

What to analyze

- By angle – which pains or benefits drive the best CTR? Does fear (“you will lose…”) perform better than gain (“you will get…”)?

- By format – UGC vs studio video, static vs animation, memes vs serious visuals.

- By elements inside the creative – presence of a face, amount of text, colors, layout and so on.

Track these dimensions in a spreadsheet, Notion database or BI tool and calculate average CTR, CPC, CR, CPA and ROI for every cluster.

Then you can scale patterns, not just single ads. For example, if “women 25–45 + shame-based angle + UGC before/after video” consistently wins, you can create 10–20 new variations of that exact pattern for new GEOs and audiences.
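The per-cluster metrics can be computed with a few lines of plain Python. This sketch assumes each ad is exported as a dict with the fields shown; the field names, the sample rows and `cluster_report` are illustrative. It sums raw counts per cluster first and derives the ratios from the totals, which is a volume-weighted average and keeps low-traffic ads from distorting the result.

```python
from collections import defaultdict

FIELDS = ("impressions", "clicks", "conversions", "spend", "revenue")


def ratio(numerator, denominator):
    """Divide safely; None marks a metric that is undefined for this cluster."""
    return numerator / denominator if denominator else None


def cluster_report(rows, keys=("angle", "format")):
    """Aggregate ad rows by the given cluster keys and compute CTR, CPC, CR, CPA, ROI."""
    totals = defaultdict(lambda: dict.fromkeys(FIELDS, 0))
    for row in rows:
        cluster = tuple(row[k] for k in keys)
        for field in FIELDS:
            totals[cluster][field] += row[field]
    return {
        cluster: {
            "ctr": ratio(t["clicks"], t["impressions"]),
            "cpc": ratio(t["spend"], t["clicks"]),
            "cr": ratio(t["conversions"], t["clicks"]),
            "cpa": ratio(t["spend"], t["conversions"]),
            "roi": ratio(t["revenue"] - t["spend"], t["spend"]),
        }
        for cluster, t in totals.items()
    }


# Made-up sample rows, only to show the expected input shape.
rows = [
    {"angle": "pain", "format": "ugc", "impressions": 1000, "clicks": 30,
     "conversions": 3, "spend": 30.0, "revenue": 90.0},
    {"angle": "pain", "format": "ugc", "impressions": 1000, "clicks": 10,
     "conversions": 1, "spend": 10.0, "revenue": 10.0},
    {"angle": "dream", "format": "static", "impressions": 2000, "clicks": 20,
     "conversions": 0, "spend": 20.0, "revenue": 0.0},
]
for cluster, metrics in cluster_report(rows).items():
    print(cluster, metrics)
```

Changing `keys` to `("angle",)` or `("format",)` gives the single-axis views described in the list above.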

7. Budget Management and Delivery Control

Even the best testing framework will fail if the ad network doesn’t give traffic to most of your creatives.

7.1. Getting more even delivery

- Limit the number of creatives per ad set. Five to ten ads per ad set is usually fine; forty is an almost guaranteed recipe for skewed delivery.

- Start with identical bids and budgets. Give all ads a fair chance before winners are selected.

- Use duplicate ad sets with different creative sets. For example:

  - Ad set A → clusters 1 + 2

  - Ad set B → clusters 3 + 4

This increases the chance that each cluster receives enough traffic.
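The split of clusters across ad sets can be scripted so the per-ad-set limit is enforced automatically. This is a sketch only: `assign_ad_sets`, the two-clusters-per-ad-set default and the limit of ten ads are assumptions drawn from the guidance above, not platform requirements.

```python
def assign_ad_sets(creatives_by_cluster, clusters_per_ad_set=2, max_ads=10):
    """Group clusters into ad sets and refuse any ad set that exceeds max_ads."""
    cluster_names = list(creatives_by_cluster)
    ad_sets = []
    for start in range(0, len(cluster_names), clusters_per_ad_set):
        group = cluster_names[start:start + clusters_per_ad_set]
        ads = [ad for name in group for ad in creatives_by_cluster[name]]
        if len(ads) > max_ads:
            raise ValueError(f"{group} would put {len(ads)} ads in one ad set")
        ad_sets.append({"clusters": group, "ads": ads})
    return ad_sets


# Hypothetical wave: 4 clusters with 4 creatives each.
wave = {f"cluster{n}": [f"cluster{n}_v{v}" for v in range(1, 5)] for n in range(1, 5)}
for ad_set in assign_ad_sets(wave):
    print(ad_set["clusters"], len(ad_set["ads"]))
```

Raising an error instead of silently truncating makes an oversized ad set visible at planning time, before any budget is spent.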

7.2. Moving budget to winners

Once winners are clear, move them into a dedicated scaling ad set or campaign, gradually increase the budget and keep a smaller budget on the testing campaign where you continue running new waves.

This way you always have two engines running in parallel:

- a money engine (scaling your proven creatives), and

- a testing engine (searching for new angles and formats).

8. Automation: Don’t Live Inside Ads Manager

The more creatives you have, the more routine work appears. A minimal automation stack can save a lot of time:

- Auto rules in the ad platform – to pause ads with low CTR or excessive cost and to increase budgets for ad sets that beat your ROI target.

- Naming and UTM templates – define your structure once and then generate names and links via templates or scripts.

- Reports in a tracker or BI tool – focus on summaries by angle, format and element instead of scrolling through hundreds of individual ads.

- Bulk upload tools – use CSV/Excel templates, APIs or external tools to upload dozens of creatives at once.

9. Common Mistakes When Testing 100+ Creatives

- No hypothesis matrix. You end up with dozens of random images and zero insight into why something worked.

- Mixing everything together. Different GEOs, audiences, placements and objectives inside the same campaign make it impossible to know what really drives results.

- No clear stop rules. Some creatives are killed after 100 impressions, others burn 10× CPA “because I like this banner”.

- No dedicated scaling setup for winners. People discover a good creative but leave it in the testing mess instead of moving it to a clean scaling ad set or campaign.

- Ignoring traffic volume. Drawing strong conclusions from two or three conversions is almost the same as reading tea leaves.
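To see why a handful of conversions says so little, it helps to put a confidence interval around the observed conversion rate. The sketch below uses the Wilson score interval at roughly 95% confidence (z = 1.96); this is a standard statistical tool brought in here for illustration, not part of the framework, and `wilson_interval` is a hypothetical helper name.

```python
from math import sqrt


def wilson_interval(conversions, clicks, z=1.96):
    """Wilson score interval for a conversion rate; z = 1.96 is about 95% confidence."""
    if clicks == 0:
        raise ValueError("need at least one click")
    p = conversions / clicks
    denom = 1 + z ** 2 / clicks
    center = (p + z ** 2 / (2 * clicks)) / denom
    half = z * sqrt(p * (1 - p) / clicks + z ** 2 / (4 * clicks ** 2)) / denom
    return center - half, center + half


low, high = wilson_interval(3, 100)
print(f"3 conversions from 100 clicks: {low:.1%} to {high:.1%}")
low, high = wilson_interval(30, 1000)
print(f"30 conversions from 1000 clicks: {low:.1%} to {high:.1%}")
```

With 3 conversions from 100 clicks the plausible range runs from about 1% to over 8%, so two creatives with very different true conversion rates can look identical at that volume. The same 3% rate measured on 1,000 clicks gives a much narrower range.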

10. Quick Checklist of the Framework

- Define the testing goal and build a hypothesis matrix (angles × audiences × formats).

- Design your campaign structure so creatives are tested on a stable background (same offer, GEO, objective and audience).

- Split testing into waves of 20–30 creatives instead of launching 100 at once.

- Set statistical thresholds and stop rules before you launch.

- Analyze results by clusters and patterns, not just by individual images.

- Move winners into dedicated scaling campaigns and keep a separate “sandbox” for new tests.

- Automate as much as possible: rules, naming, reporting and bulk uploads.

Testing 100+ creatives doesn’t have to turn your account into chaos. With a clear framework and strong discipline around structure, waves, and stop rules, creative testing becomes a predictable process that feeds your business with constant winners instead of random luck.